Red teams simulate real-world attacks to verify that the organization can detect an attack and respond before it causes any damage. The team responsible for identifying and responding to these attacks is the Blue team.

Simulating real-world attacks requires careful study of how attacks unfold and what the adversary intends. We need to know the threat agent and its goals (reputational damage, data destruction, etc.) to put effective protection in place. Detection initiatives driven without knowledge of the nature of attacks and the adversary’s intentions turn into a wild goose chase.

Attack simulations make an organization better prepared to handle real-world attacks and reduce the time it takes to detect a breach.

Red teaming focuses on three attack surfaces: people (e.g., tricking a security guard into granting entry, phishing an employee), physical locations (e.g., lock picking), and technology (e.g., application hacking).

Why your existing security tools & solutions aren’t useful

Organizations tend to focus on strengthening perimeter defenses. A significant portion of the security budget is invested in solutions such as firewalls, WAF, IPS/IDS, antivirus, and various other tools from OEMs. These solutions and tools are necessary; however, organizations also need to address these scenarios:

- What if we are already breached?

- What actions can we take? Should we check all the devices for logs and check the defenses for alerts and rules?

- Are we safe if there are no clues, or are we missing something?

At most, organizations have a refined security incident response policy that they can follow immediately.

Now the question is, how will we know if we have been breached? According to research, it takes an organization 197 days to detect a breach. A breached organization often has no idea how the adversary got in, what the adversary is doing now, how it is moving laterally, how far the compromise has spread, or what to do next.

Here are some of the most common defenses organizations adopt, along with the reasons they fail to detect adversaries despite all that cyber armor.

- Antivirus: Adversaries use custom-built tools and test them against conventional industry-standard products to evade detection.

- IPS/IDS, Firewall, WAF: These solutions are often poorly tuned, which helps the adversary get in.

- VAPT program: Vulnerability assessment and penetration testing (VAPT) is performed by pen testers, who depend on developers to fix the vulnerabilities. The focus of VAPT is to ensure that the identified weaknesses get documented; getting them fixed is a separate task. A VAPT program does not consider whether the organization is already compromised or whether adversaries are using zero-day exploits.

Red teaming – The way it’s done

A Red teaming simulation is executed in two phases.

- Phase 1: Breach the organization from outside and simulate the damage.

- Phase 2: Try to detect the attacker who conducted the breach and is now present inside the organization.

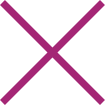

Let’s look at the most common detection practices and how adversaries render them ineffective. David Bianco’s “Pyramid of Pain” ranks the elements of an attack by how hard it is for an attacker to change them.

- Hashes and IP addresses: Hashes, signatures, and IP addresses can easily be changed during an enterprise-wide attack. Different signatures, obfuscation techniques, and botnets make this trivial. And if the attack continues from legitimate IP addresses, can we afford to block them and risk the business impact?

- Domain Names: Attackers can change domain names as well. The Necurs botnet could have used 6 million domain names in 25 months. How many can you block?

- Network and Host Artifacts: Segmentation and network- and host-level controls can restrict the attack, but there is always a workaround. Changing these artifacts is annoying for an attacker but not a big challenge, whereas detecting and monitoring them in large environments is difficult (e.g., detecting a change to a registry entry).

- Tools: An attacker’s tooling can range from open-source tools to custom-developed ones.

- TTPs: These are the hardest for an attacker to change. If we, for example, disable the password memory cache and lower the admin debug levels, the attacker’s job becomes much harder because we have acted on the behavior rather than on the lower-level elements.
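One way to put the pyramid to work is to rank existing detections by the level of the indicator they rely on. The following is a minimal sketch of that idea, not part of Bianco’s model itself: the `Pain` enum, the `rank_detections` helper, and the sample detection names are all hypothetical and only illustrate the ordering described above.

```python
from enum import IntEnum


class Pain(IntEnum):
    """Pyramid levels, ordered from cheapest to costliest for an attacker to change."""
    HASH = 1
    IP_ADDRESS = 2
    DOMAIN_NAME = 3
    NETWORK_HOST_ARTIFACT = 4
    TOOL = 5
    TTP = 6


def rank_detections(detections):
    """Sort (name, level) pairs so detections on harder-to-change indicators come first."""
    return sorted(detections, key=lambda d: d[1], reverse=True)


# Hypothetical detection rules, tagged with the pyramid level they depend on.
detections = [
    ("block known-bad file hash", Pain.HASH),
    ("alert on credential dumping behavior", Pain.TTP),
    ("blocklist command-and-control domain", Pain.DOMAIN_NAME),
    ("alert on changed registry entry", Pain.NETWORK_HOST_ARTIFACT),
]

ranked = rank_detections(detections)
for name, level in ranked:
    print(f"{level.name:<22} {name}")
```

Detections that land at the top of this list are the ones an adversary will find most expensive to work around, which makes them reasonable candidates to prioritize.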

Getting started with the MITRE ATT&CK framework

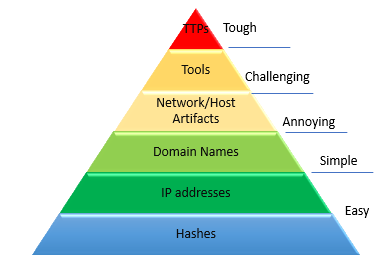

MITRE ATT&CK: (A)dversarial (T)actics, (T)echniques, (&) (C)ommon (K)nowledge

This framework describes how adversaries penetrate the network and then move laterally, escalate privileges, and evade your defenses. It looks at the problem from the adversary’s perspective: the goals they are trying to achieve and the methods they use. By leveraging this framework, we can strengthen our preparedness for an attack.

The matrix above provides a detailed breakdown of adversary behavior that organizations can use to develop a model that fits their environment and makes them better prepared for an attack.

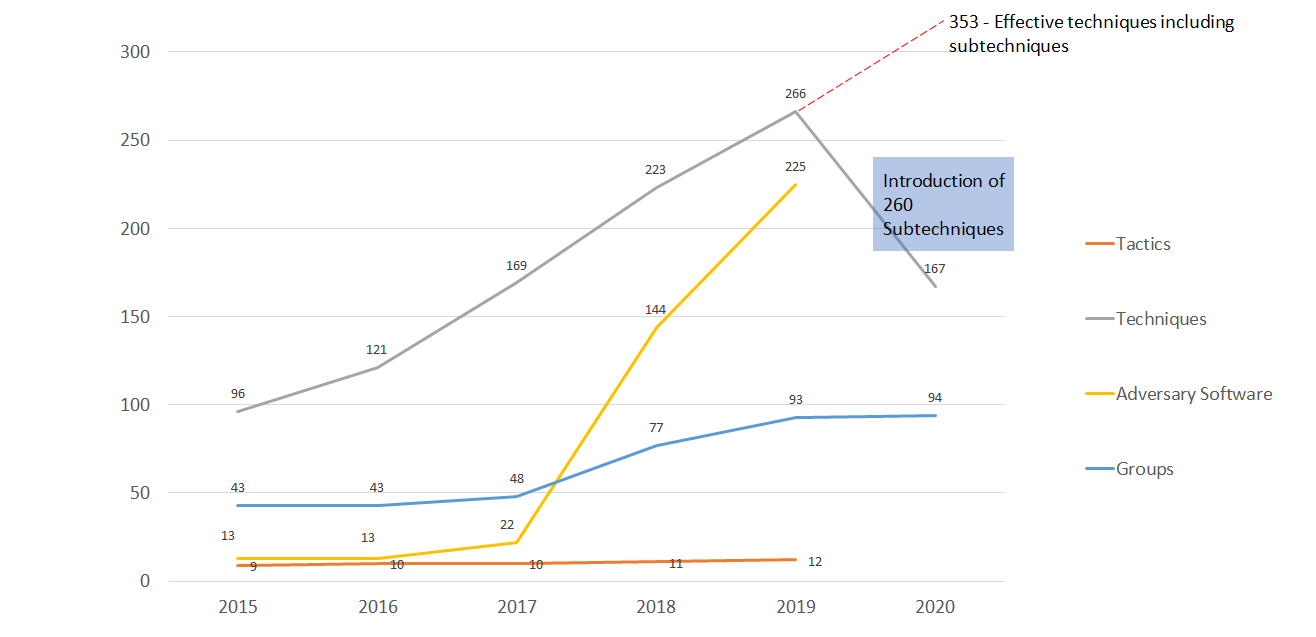

Trends in adversarial elements

Since the framework keeps evolving and its content is too extensive to maintain by hand, MITRE has developed an online interactive navigator for the framework. It removes the need to update a spreadsheet manually every time something changes.

How to use this framework

Identify the adversary groups that are likely to target your organization. MITRE maintains a list of these groups on its website, along with their targeting history. Map each group into the navigator as a separate layer. An advantage of the framework is that users can correlate TTPs across multiple layers.
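As a rough illustration of what a per-group layer holds, the sketch below builds a small layer as a Python dictionary and prints it as JSON. It is only loosely modeled on the navigator’s layer files: the `build_layer` helper is hypothetical, the field names are assumptions to verify against the navigator’s layer format documentation, and the technique IDs are placeholders rather than the real technique set of any group.

```python
import json


def build_layer(name, technique_ids, color, score):
    """Return a dict loosely shaped like a navigator layer (field names are assumptions)."""
    return {
        "name": name,
        "domain": "enterprise-attack",
        "techniques": [
            {"techniqueID": tid, "color": color, "score": score}
            for tid in technique_ids
        ],
    }


# Placeholder technique IDs; a real layer would list the group's documented techniques.
layer = build_layer("Example group", ["T1566", "T1059"], "#ff0000", 1)
print(json.dumps(layer, indent=2))
```

Keeping one such layer per adversary group is what makes the later cross-layer comparison possible.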

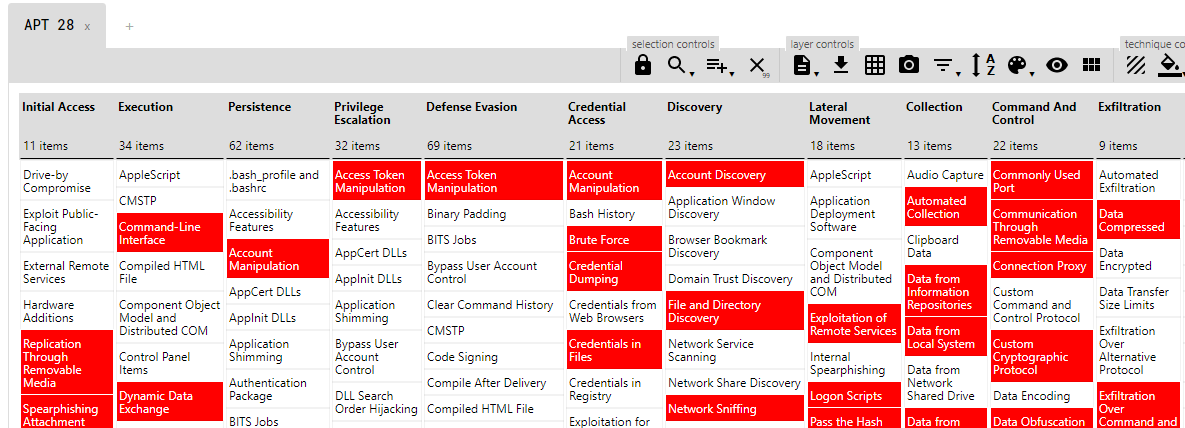

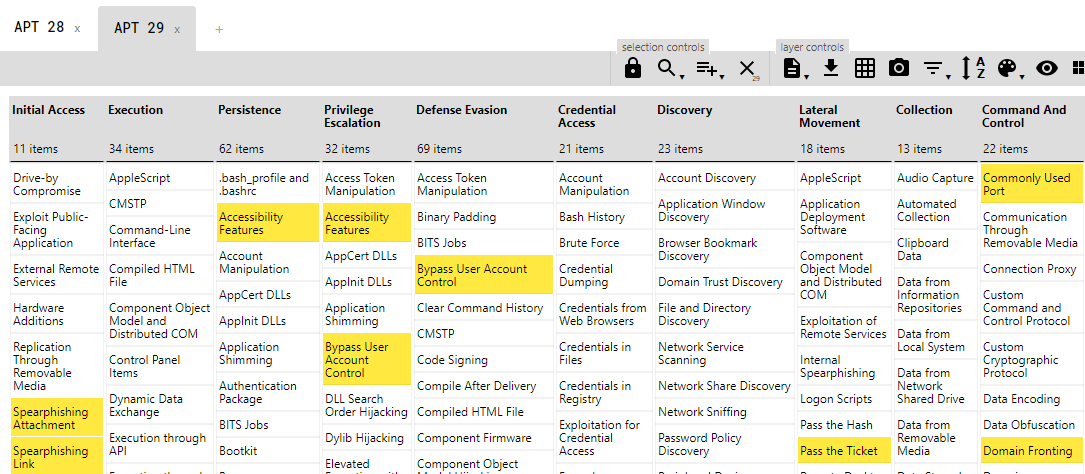

Let’s take an example with two adversary groups, APT 28 and APT 29.

APT 28: Color for TTPs - Red, Score per TTP - 1

APT 29: Color for TTPs - Yellow, Score per TTP - 2

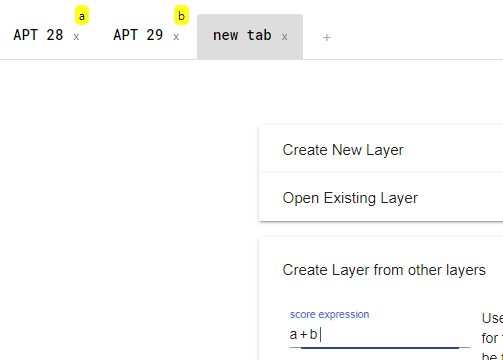

We can create a new layer that holds the techniques common to both groups, based on the scores of the two APT layers.

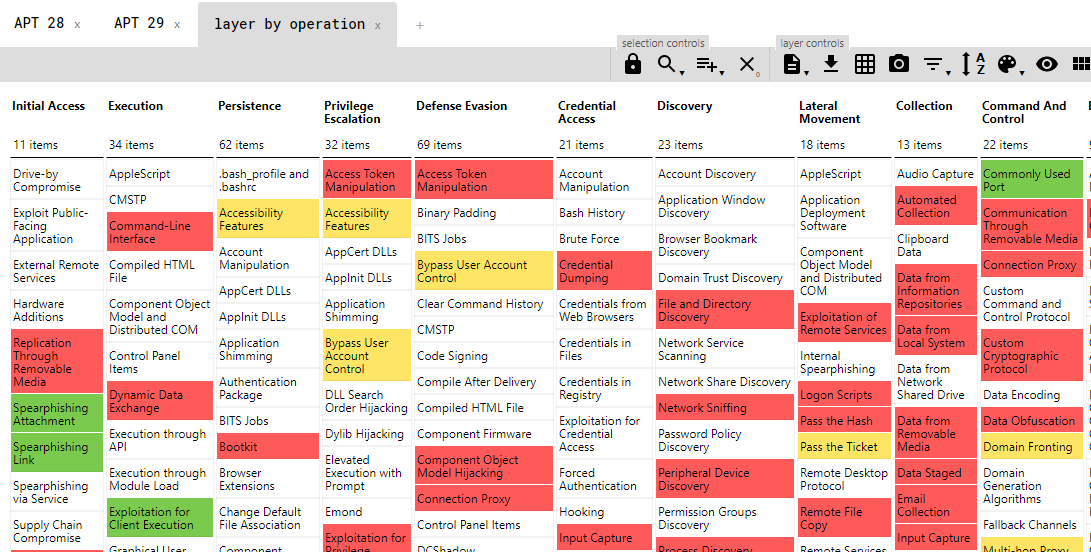

Here is the consolidated layer for APT 28 and APT 29.

Color: Green = APT 28 TTP Score (1) + APT 29 TTP Score (2) = 3

Red teams can now see the behavior common to both adversary groups and launch effective simulation and detection activities.
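The score arithmetic behind the consolidated layer can be sketched in a few lines. This is an illustration of the idea rather than the navigator’s own implementation: `combine_layers` is a hypothetical helper, and the technique IDs assigned to each group are placeholders, not the groups’ actual technique sets. With a score of 1 for APT 28 and 2 for APT 29, a combined score of 3 marks a technique used by both.

```python
def combine_layers(*layers):
    """Sum per-technique scores across layers (each layer maps technique ID -> score)."""
    combined = {}
    for layer in layers:
        for technique_id, score in layer.items():
            combined[technique_id] = combined.get(technique_id, 0) + score
    return combined


# Placeholder technique IDs only; NOT the real technique sets of these groups.
apt28 = {tid: 1 for tid in ("T1566", "T1059", "T1003")}
apt29 = {tid: 2 for tid in ("T1566", "T1059", "T1078")}

combined = combine_layers(apt28, apt29)

# Score 3 = 1 + 2, so the technique appears in both layers (the green cells).
shared = sorted(tid for tid, score in combined.items() if score == 3)
print(shared)  # ['T1059', 'T1566']
```

Giving each layer a distinct score is what lets the sum identify which groups contributed: 1 means APT 28 only, 2 means APT 29 only, and 3 means both.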